“It ain’t what you don’t know that gets you into trouble. It is what you know for sure that just ain’t so” – Mark Twain.

On September 13, 2018, at 4:00 PM in Lawrence, Massachusetts, a major accident happened only a few miles from where I work at AW Chesterton’s world headquarters in Groveland. The event affected many of our workers who live in the area. It was an accident rooted in tribal knowledge and a failure to imagine the unexpected.

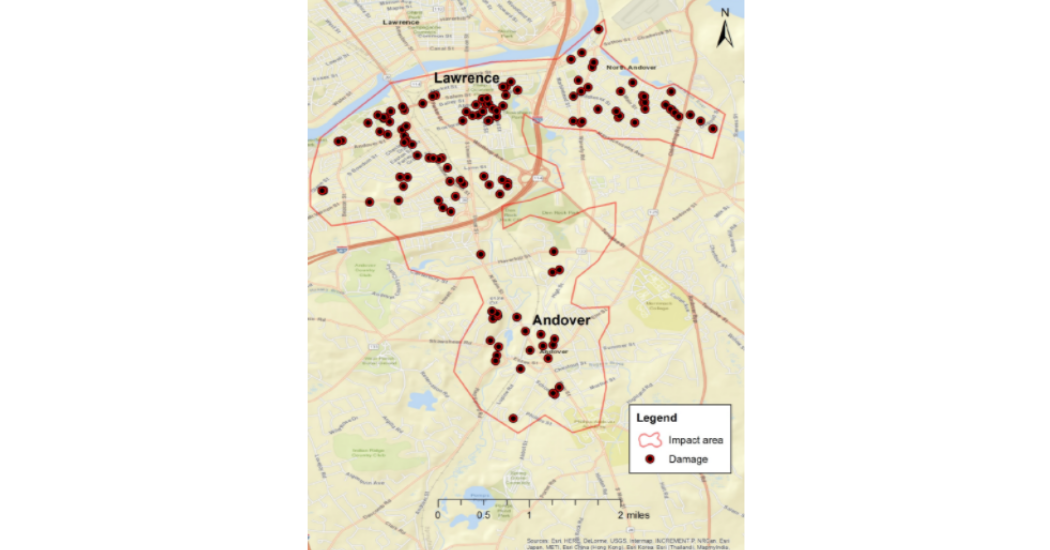

The mistake made that day killed one person and sent 22 people to the hospital. The fires and explosions damaged 131 structures, including at least five homes that were destroyed, in the city of Lawrence and the towns of Andover and North Andover. Most of the damage was caused by fires ignited by natural gas-fueled appliances; several of the homes were destroyed by natural gas-fueled explosions.

To understand this accident, you need to know a few basics about gas pipelines. A regulating valve is simply a device that has a “pressure set point” and a “sensing” pressure input. Based on these two values, the valve opens a small amount, closes a small amount, or stays in the same position. If the pressure in the sensing line is “lower” than the set point, the valve opens more to raise the pressure in the line toward the set point. If the sensing line pressure is “higher” than the set point, the valve closes more to lower the pressure. If the pressure in the sensing line is at (or within a small tolerance of) the set point, the valve stays at its current position.
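The open/close/hold rule described above fits in a few lines of Python. This is a minimal sketch for illustration only, not a model of any real regulator: the function name `regulator_action`, the `tolerance` deadband, and the pressure values are hypothetical and in arbitrary units.

```python
def regulator_action(set_point: float, sensed: float, tolerance: float = 0.5) -> str:
    """Decide how a simple regulating valve moves, given its sensing-line pressure.

    Hypothetical illustration: pressures are in arbitrary units and `tolerance`
    is an assumed deadband around the set point.
    """
    if sensed < set_point - tolerance:
        return "open"   # pressure too low: open a little to raise it
    if sensed > set_point + tolerance:
        return "close"  # pressure too high: close a little to lower it
    return "hold"       # within tolerance: stay at the current position


for reading in (8.0, 10.2, 12.0):
    print(reading, regulator_action(10.0, reading))
```

With a set point of 10, readings of 8.0, 10.2, and 12.0 give "open", "hold", and "close". Notice that the valve only ever acts on the number it is given; it has no way to know whether that number reflects the pipe it is actually feeding.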

Image from NTSB accident report PB2019-101365

On that day in September 2018, workers were installing an upgrade to a natural gas line. They were trained for this work, and the NTSB report does not cite drugs or stress as factors. But the documentation for the job was incomplete, and the workers were somehow convinced that a “sensing” line was connected to the right pipe for the valve in the system. Because of that conviction, when they pressurized the new piping, they could not believe what they were seeing. The valve’s sensing line was actually connected to a pipe that was not pressurized, so it relayed a low pressure reading to the valve. This caused the valve to keep opening even as the actual pressure in the line climbed higher and higher, beyond the limits of the pipe and the homes it served.
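To see why a misplaced sensing line is so dangerous, here is a toy Python simulation. It is a sketch under loudly stated assumptions: the plant model, supply pressure, step sizes, and alarm limit are all made up and are not NTSB data or real regulator dynamics. When the sensing line is connected to a dead pipe it always reads zero, so the valve keeps opening while the true downstream pressure climbs toward the upstream supply. The hypothetical `first_disagreement` helper illustrates the "verify" step: comparing the regulator’s reading against an independent gauge.

```python
from typing import List, Optional, Tuple


def simulate(sensing_connected: bool, steps: int = 20) -> List[Tuple[float, float]]:
    """Toy regulator model; returns (sensed reading, resulting true pressure) per step."""
    set_point, deadband = 10.0, 0.5
    supply = 100.0   # assumed upstream (high-pressure) side
    opening = 0.1    # valve position: 0.0 closed, 1.0 fully open
    actual = 10.0    # true downstream pressure, starting at the set point
    history = []
    for _ in range(steps):
        # A sensing line tied to an unpressurized pipe reads zero no matter what
        sensed = actual if sensing_connected else 0.0
        if sensed < set_point - deadband:
            opening = min(1.0, opening + 0.1)
        elif sensed > set_point + deadband:
            opening = max(0.0, opening - 0.1)
        # Assumed plant response: pressure moves halfway toward supply * opening
        actual += 0.5 * (supply * opening - actual)
        history.append((sensed, actual))
    return history


def first_disagreement(history: List[Tuple[float, float]], limit: float = 5.0) -> Optional[int]:
    """First step where the regulator's reading and an independent gauge differ by more than `limit`."""
    for step, (sensed, actual) in enumerate(history):
        if abs(sensed - actual) > limit:
            return step
    return None


for connected in (True, False):
    history = simulate(connected)
    print(connected, round(history[-1][1], 1), first_disagreement(history))
```

With `simulate(True)` the downstream pressure stays at the set point. With `simulate(False)` it climbs past 90 within ten steps, even though the valve "believes" the line is empty, and `first_disagreement` flags the mismatch at the very first step.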

In industrial settings, many accidents happen not because training was poorly executed but because it was limited to linear logic. For example, A + B = C, but what happens when you do the math and get D? Training programs should be reviewed so that they account for the possibility that what you are seeing could be a black swan event. The black swan metaphor refers to unpredictable events, such as September 11, 2001, that happen from time to time and have enormous consequences. The phrase is centuries old, but it became widely known after Nassim Nicholas Taleb’s bestseller, The Black Swan: The Impact of the Highly Improbable. Although people usually associate black swan events with finance, they also occur in industry, for example when unverified information is taken as fact.

Another example of this kind of mistaken certainty involves Garry Hoy. Besides being a lawyer, Garry had been a professional engineer before entering law school. In 1993, Mr. Hoy was meeting with prospective law students for his firm on the 24th floor of a downtown Toronto skyscraper. He liked to perform a stunt to show off the building’s “unbreakable” glass by running at full speed into a window and bouncing off it. The demonstration highlighted his engineering background and really captured people’s attention. On this day, in the firm’s main conference room, he repeated the trick he had done many times before, but this time the frame gave way and he fell to his death. The investigation determined that the frame was not designed for that type of force from the inside, and that Mr. Hoy’s repeated impacts had caused small, undetectable stress cracks in the frame. This story shows how even smart people can fall into the Dunning-Kruger effect, becoming overconfident when they have only a simple idea of how things work. Mr. Hoy’s basic engineering knowledge of “unbreakable” glass did not take metal fatigue into account, and it cost him his life.

Another part of the failure in Lawrence, MA, was trust versus reality. The work on the gas lines resumed after a year-and-a-half delay, and the workers were not the same ones who had started it. A lack of situational knowledge and of good documentation led the workers to trust that the sensing line was connected in the right place when it was not. As Mark Twain put it, someone knew for sure that the situation was correct when it just was not so.

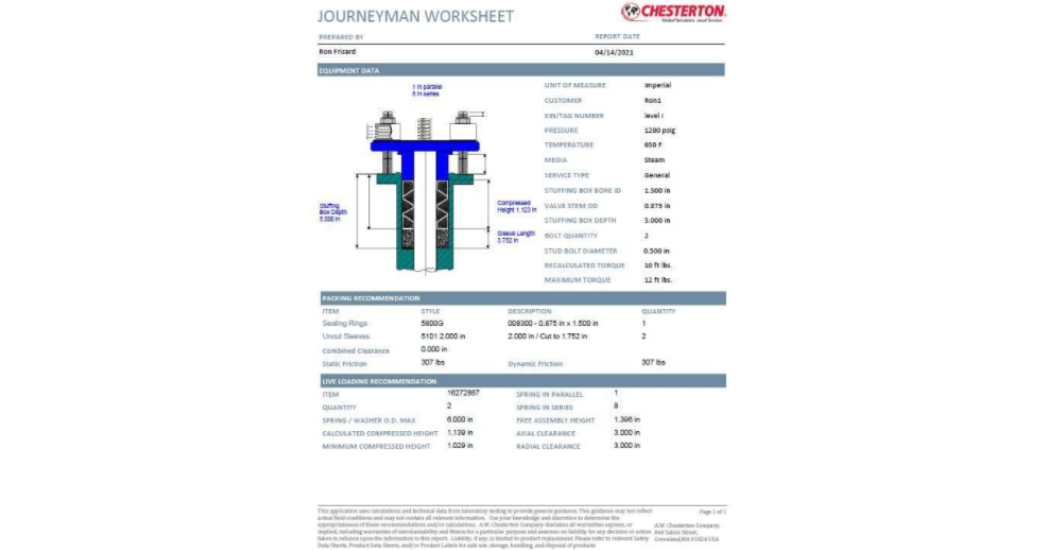

Chesterton’s Journeyman worksheet

Another part of this story is how better transparency between engineering and the installation crews on the ground could have averted such a catastrophe. The same situation can happen in industrial maintenance. Tribal knowledge is such an issue because of incomplete documentation, and trusting perceived knowledge without confirming it can lead to disaster. This has been shown in many situations, including valve packing. One example is using the correct packing size for the valve so that it seals correctly; using the wrong size can create a leak that can cause a fire and environmental harm. AW Chesterton has been focused on using software to ensure installers have the correct and latest information they need when they are installing valve packing. The software tool provides a one-page document called a journeyman worksheet that puts all the information in one place, including packing and valve dimensions, required torque values, and live-loading information. Because the software is in the cloud, both engineering and maintenance can access it anywhere, 24/7/365. It is one more step toward giving every group a clear line of sight to the valve data and making sure that data is kept in the right place.
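A worksheet is valuable because the numbers on it are checked, not just recorded. As a minimal sketch of that idea, the Python below stores a few valve dimensions and verifies that the specified packing fits, using the common rule of thumb that packing cross-section equals (stuffing box bore − stem diameter) / 2. The `WorksheetEntry` fields, the valve tags, the 0.25 mm tolerance, and the function names are all hypothetical; this is not the structure or logic of Chesterton’s software.

```python
from dataclasses import dataclass


@dataclass
class WorksheetEntry:
    """Hypothetical, simplified worksheet record (dimensions in mm)."""
    valve_tag: str
    stem_diameter_mm: float
    stuffing_box_bore_mm: float
    specified_packing_mm: float


def required_cross_section(entry: WorksheetEntry) -> float:
    """Packing fills the radial gap between the stem and the stuffing box bore."""
    if entry.stuffing_box_bore_mm <= entry.stem_diameter_mm:
        raise ValueError(f"{entry.valve_tag}: bore must be larger than stem")
    return (entry.stuffing_box_bore_mm - entry.stem_diameter_mm) / 2


def packing_size_ok(entry: WorksheetEntry, tolerance_mm: float = 0.25) -> bool:
    """True if the specified packing is within an assumed tolerance of the required size."""
    return abs(entry.specified_packing_mm - required_cross_section(entry)) <= tolerance_mm


if __name__ == "__main__":
    good = WorksheetEntry("V-101", 40.0, 60.0, 10.0)
    wrong = WorksheetEntry("V-102", 40.0, 60.0, 8.0)
    for entry in (good, wrong):
        print(entry.valve_tag, required_cross_section(entry), packing_size_ok(entry))
```

For a 40 mm stem in a 60 mm bore, the required cross-section is 10 mm, so an entry specifying 8 mm packing fails the check before anyone goes out to the valve.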

When training for industrial maintenance, it is also important to prepare for the unexpected. One way to do this is scenario-based training, which puts students in situations where they may receive conflicting data. This type of training teaches them not to get trapped by trusting, rather than verifying, what they are looking at. It is used heavily in aviation to help pilots keep a clear head when interpreting what they see and to avoid confirmation bias. Training built on rigor and a lifelong-learning mindset can help ensure that, when a black swan appears, the clearest heads limit the outcome.

Ron Frisard

Ron Frisard has spent 30 years at the A.W. Chesterton Company as a technical expert in all facets of mechanical packing and industrial gasketing. Ron graduated from Northeastern University in Boston with a degree in Mechanical Engineering Technology. Before joining Chesterton, he worked for Newport News Shipbuilding as a design engineer on the propulsion systems for aircraft carriers. Ron is currently Global Training Manager for AW Chesterton, where he manages the design, development, analysis, and execution of training for the company.